Designing SMS Routing, Retries, and Observability for World Cup Traffic

When traffic spikes during the World Cup, teams don't lose because they "lack SMS." They lose because they lack control: routing that adapts when a market degrades, retry discipline that doesn't amplify incidents, observability that answers incident questions in minutes. This post is a provider-agnostic reference design for SMS-only workloads—built for mission-critical and promotional messaging under burst traffic.

What "Good" Looks Like Under Peak Load

A peak-ready SMS system optimizes for four outcomes:

- Stable delivery by market (country/carrier), not just global averages

- Predictable latency (percentiles) under bursts

- Actionable DLR (delivery receipts that are timely, granular, and trustworthy)

- Graceful degradation when routes degrade (containment, not chaos)

If your current system can't deliver these four outcomes, it will feel "fine" in normal traffic until a major event exposes how brittle it is.

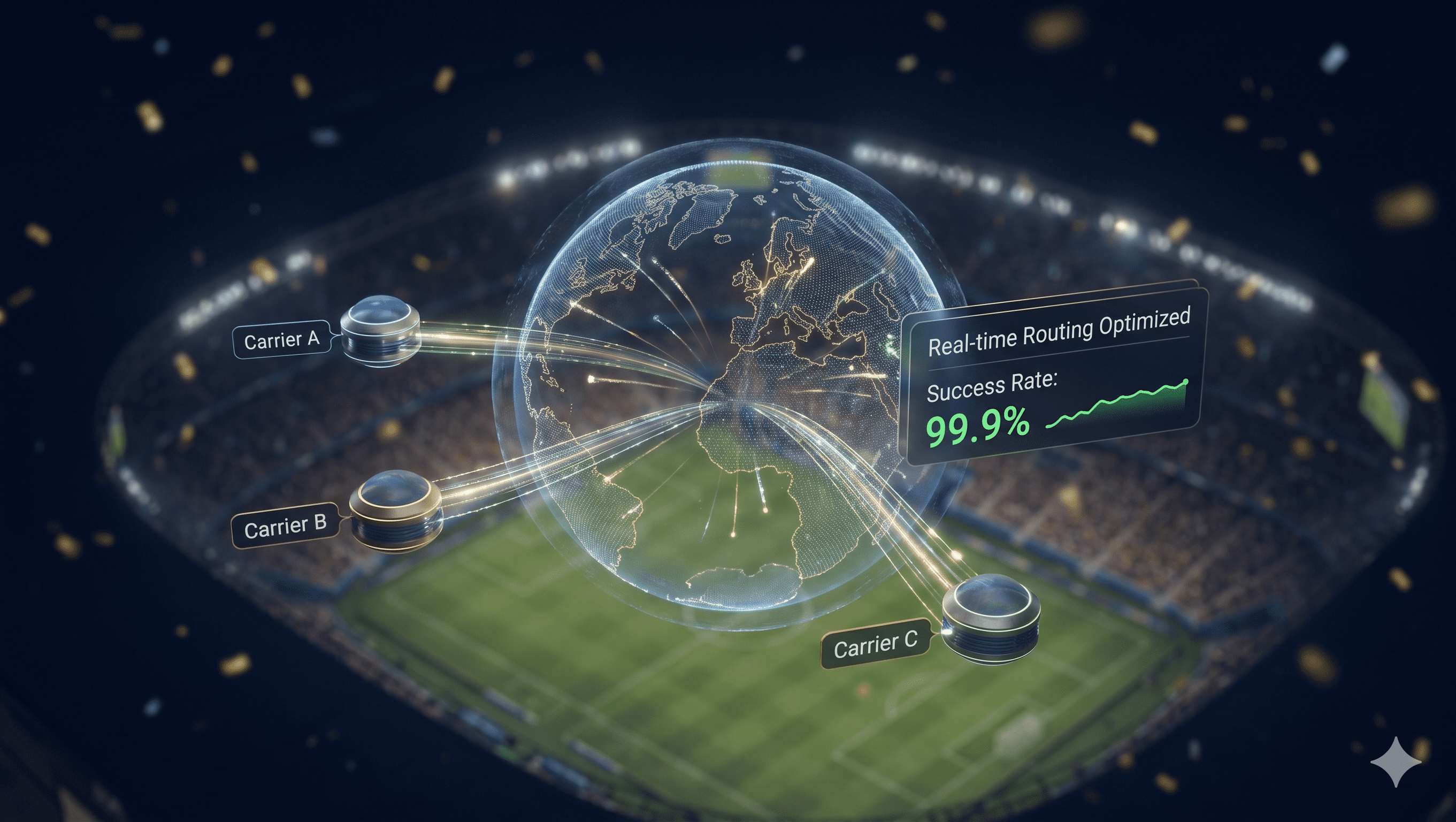

1) Routing: Move from Static Routes to Quality-Aware Routing

Static routing assumes your best path today stays best tomorrow. Peak events break that assumption. According to GSMA's 2025 messaging infrastructure report, carrier performance characteristics shift significantly under high-traffic conditions, with route quality degrading 40-60% faster than baseline measurements suggest.

A Practical Routing Model (The Mental Map)

Think in layers:

- Destination intelligence: country → carrier group → route options

- Policy layer: rules by message class (mission-critical vs promo), region, and time window

- Quality signals: delivery rate, DLR latency, error codes, filtering indicators

- Execution: route selection, failover, controlled retries

If you can't observe your signals, you can't trust your routing decisions.
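To make the four layers concrete, here is a minimal sketch in Python of how they might fit together in code. It is not any provider's routing engine. The `RouteStats` fields, the `ROUTE_OPTIONS` table, the `POLICY` thresholds, and all country, carrier, and route names are hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class RouteStats:
    """Recent quality signals for one route (illustrative fields)."""
    route_id: str
    delivery_rate: float     # delivered / sent over a recent window
    dlr_p95_s: float         # 95th-percentile DLR latency, seconds
    filtered_rate: float     # share of sends flagged as filtered

# Destination intelligence: (country, carrier group) -> candidate routes.
# All country, carrier, and route names are hypothetical placeholders.
ROUTE_OPTIONS = {
    ("BR", "carrier-a"): ["route-1", "route-2"],
    ("BR", "carrier-b"): ["route-2", "route-3"],
}

# Policy layer: per message class, the minimum quality a route must show.
# Threshold values are illustrative, not recommendations.
POLICY = {
    "mission_critical": {"min_delivery": 0.97, "max_dlr_p95_s": 30.0},
    "promo": {"min_delivery": 0.90, "max_dlr_p95_s": 120.0},
}

def select_route(country: str, carrier: str, message_class: str,
                 stats: dict[str, RouteStats]) -> Optional[str]:
    """Execution layer: return the best route that passes policy, or None."""
    policy = POLICY[message_class]
    eligible = [
        stats[route_id]
        for route_id in ROUTE_OPTIONS.get((country, carrier), [])
        if route_id in stats
        and stats[route_id].delivery_rate >= policy["min_delivery"]
        and stats[route_id].dlr_p95_s <= policy["max_dlr_p95_s"]
    ]
    if not eligible:
        return None  # no qualifying route: escalate rather than send blind
    # Rank by delivery rate, then lower filtering, then faster DLRs.
    best = max(eligible, key=lambda s: (s.delivery_rate, -s.filtered_rate, -s.dlr_p95_s))
    return best.route_id
```

In this sketch the same carrier can be served by different routes for mission-critical and promotional traffic, because the policy layer sets a different quality bar for each class.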

What Real-Time Intelligent Routing Should Do (Capability Checklist)

Not "AI." Not magic. Just measurable behavior:

- ✓ Detect early degradation (before complaint volume rises)

- ✓ Shift traffic without thrashing (avoid constant oscillation)

- ✓ Respect governance constraints (sender identity, template rules)

- ✓ Preserve reporting continuity (so the team can still diagnose outcomes)
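One common way to detect degradation from measured signals while damping noise is an exponentially weighted moving average (EWMA) compared against a baseline. The sketch below assumes you already compute delivered and sent counts per route and market for each time window; the `DegradationDetector` class and its `alpha` and `drop_ratio` defaults are hypothetical and would need tuning against your own traffic.

```python
class DegradationDetector:
    """Flags a route whose smoothed delivery rate falls well below its baseline.

    An exponentially weighted moving average (EWMA) absorbs a single noisy
    window but reacts to a sustained drop. alpha and drop_ratio are illustrative.
    """

    def __init__(self, baseline: float, alpha: float = 0.2, drop_ratio: float = 0.9):
        self.baseline = baseline        # normal delivery rate for this route/market
        self.alpha = alpha              # weight of the newest window (0 < alpha <= 1)
        self.drop_ratio = drop_ratio    # degraded if EWMA < baseline * drop_ratio
        self.ewma = baseline

    def observe(self, delivered: int, sent: int) -> bool:
        """Feed one measurement window; return True while degradation is detected."""
        if sent > 0:
            rate = delivered / sent
            self.ewma = self.alpha * rate + (1 - self.alpha) * self.ewma
        return self.ewma < self.baseline * self.drop_ratio
```

With a 0.98 baseline, one window at 70% delivery leaves the smoothed rate above the trip point, while a second consecutive window at 70% crosses it. A larger `alpha` reacts faster but is more sensitive to noise.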

Failover Patterns That Work in the Real World

1 Hot Failover

Switch traffic quickly when thresholds break. Best for: mission-critical SMS where delays are expensive. Risk: overreacting to noise if thresholds are poorly tuned.
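A common way to keep hot failover from thrashing is to fail over fast but fail back slowly: use two thresholds (hysteresis) and a minimum dwell time before returning to the primary route. The sketch below is one possible shape for that logic. The `HotFailover` class, its threshold defaults, and the injectable `clock` parameter (used so the behavior can be tested) are all hypothetical.

```python
import time
from typing import Callable

class HotFailover:
    """Fail over fast, fail back slowly.

    Traffic moves to the backup as soon as the primary's delivery rate drops
    below trip_below. It returns only after the primary recovers above the
    higher recover_above threshold AND min_dwell_s has passed since failover.
    The threshold gap (hysteresis) and the dwell time keep a noisy signal
    from bouncing traffic back and forth. All defaults are illustrative.
    """

    def __init__(self, primary: str, backup: str, trip_below: float = 0.90,
                 recover_above: float = 0.96, min_dwell_s: float = 300.0,
                 clock: Callable[[], float] = time.monotonic):
        if trip_below >= recover_above:
            raise ValueError("recover_above must exceed trip_below")
        self.primary, self.backup = primary, backup
        self.trip_below, self.recover_above = trip_below, recover_above
        self.min_dwell_s = min_dwell_s
        self.clock = clock
        self.active = primary
        self.failed_over_at = 0.0

    def update(self, primary_delivery_rate: float) -> str:
        """Feed the primary route's latest delivery rate; return the route to use."""
        now = self.clock()
        if self.active == self.primary:
            if primary_delivery_rate < self.trip_below:
                self.active, self.failed_over_at = self.backup, now
        elif (primary_delivery_rate > self.recover_above
              and now - self.failed_over_at >= self.min_dwell_s):
            self.active = self.primary
        return self.active
```

This sketch trips on a single reading, so it addresses the "overreacting to noise" risk only if you feed it a smoothed delivery rate rather than raw per-window values.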

2 Canary Shifting

Move a small share of traffic (for example, 5-10%) to the alternate route first, then ramp. Best for: promotional traffic, or when partial degradation is suspected. Risk: slower to fully recover if a route is truly down.
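A canary shift needs two pieces: a ramp schedule and a stable way to decide which messages go to the new route. The FAQ later in this post suggests starting with 5-10% of traffic. The sketch below assumes hash-based bucketing on a message ID and a hypothetical `RAMP_STEPS` schedule; neither is taken from a specific provider.

```python
import hashlib

# Illustrative ramp: start small, widen only while the new route stays healthy.
RAMP_STEPS = [0.05, 0.10, 0.25, 0.50, 1.00]

def canary_fraction(step: int) -> float:
    """Share of traffic sent to the new route at a ramp step (-1 means aborted)."""
    if step < 0:
        return 0.0
    return RAMP_STEPS[min(step, len(RAMP_STEPS) - 1)]

def next_step(step: int, new_route_healthy: bool) -> int:
    """Advance one step while healthy; abort to -1 (all traffic back) otherwise."""
    if not new_route_healthy:
        return -1
    return min(step + 1, len(RAMP_STEPS) - 1)

def use_new_route(message_id: str, fraction: float) -> bool:
    """Deterministic bucketing: the same message ID always lands on the same side."""
    digest = hashlib.sha256(message_id.encode("utf-8")).digest()
    # Compare a 64-bit hash value against the chosen share of the 64-bit space.
    return int.from_bytes(digest[:8], "big") < fraction * 2**64
```

Deterministic bucketing keeps the canary population stable between health checks, which makes before/after comparisons cleaner. If you bucket by recipient instead of message ID, retries of the same message will also follow the same split.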

3 Market Isolation

Contain a bad route within its market so the blast radius does not expand. Best for: global sends where one market is unstable. Risk: requires clean segmentation and route-level reporting.
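The core of market isolation is keying route health by market and route together, so a route that fails in one country or carrier is pulled only there. A minimal sketch, assuming you already compute a per-market delivery rate for each route; the `MarketIsolation` class and its threshold are hypothetical.

```python
class MarketIsolation:
    """Keeps route health per (market, route), so a route that fails in one
    market is pulled only there and stays available everywhere else."""

    def __init__(self, min_delivery: float = 0.90):
        self.min_delivery = min_delivery           # illustrative threshold
        self.disabled: dict[str, set[str]] = {}    # market -> disabled route IDs

    def report(self, market: str, route: str, delivery_rate: float) -> None:
        """Record a route's latest delivery rate in one market."""
        blocked = self.disabled.setdefault(market, set())
        if delivery_rate < self.min_delivery:
            blocked.add(route)
        else:
            blocked.discard(route)

    def allowed_routes(self, market: str, candidates: list[str]) -> list[str]:
        """Filter candidates down to routes not isolated in this market."""
        blocked = self.disabled.get(market, set())
        return [r for r in candidates if r not in blocked]
```

This is also why the pattern depends on route-level reporting: without per-market, per-route delivery rates there is nothing to key the isolation on.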

A mature system supports more than one pattern—because peak incidents don't all look the same.

2) Retries: The Fastest Way to Turn a Minor Delay into a Major Incident

Retries feel safe—until peak load. Under burst traffic, aggressive retries:

- Multiply volume at the worst moment

- Increase filtering risk (repeated patterns)

- Inflate costs

- Worsen queues and latency

According to Twilio's engineering research on message delivery optimization, retry amplification during traffic bursts can increase message volume by 3-5x, directly correlating with increased filtering rates and cost overruns.
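A simple model helps explain why retries amplify volume. If each attempt fails independently with probability p and every failure is retried up to r times, the expected number of sends per message is 1 + p + p² + … + p^r. This is an illustrative model, not the methodology behind the figure cited above. The `expected_attempts` helper is hypothetical.

```python
def expected_attempts(failure_prob: float, max_retries: int) -> float:
    """Expected sends per message when every failure is retried, up to max_retries.

    Assumes each attempt fails independently with the same probability, which
    is optimistic during incidents (failures on a degraded route are correlated,
    pushing the real figure closer to 1 + max_retries).
    """
    # Attempt k (0 = first send) happens only if the k earlier attempts all failed.
    return sum(failure_prob ** k for k in range(max_retries + 1))
```

With three retries, a healthy 2% failure rate adds only about 2% extra volume, but a 90% failure rate in a degraded market produces about 3.4 sends per message. When a route is effectively down, 2 to 4 retries mean 3x to 5x volume, which is the same order as the amplification cited above.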

What "Retry Discipline" Means

A good retry strategy is:

- ✓ Bounded (caps exist)

- ✓ Backoff-based (not immediate repeat sends)

- ✓ Route-aware (don't retry on the same failing path)

- ✓ Error-aware (some failures should not be retried)
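The four properties above can be combined in one retry-planning function. The sketch below is one possible shape, not a provider API. It uses exponential backoff with "full jitter" (a random delay between zero and the capped exponential value), which is one common variant among several. `MAX_ATTEMPTS`, the delay constants, and the `NON_RETRYABLE` bucket names are hypothetical.

```python
import random
from typing import Optional

MAX_ATTEMPTS = 3        # bounded: total sends per message, first send included
BASE_DELAY_S = 2.0      # illustrative backoff base
MAX_DELAY_S = 60.0      # illustrative backoff cap

# Error-aware: hypothetical bucket names that should never be retried.
NON_RETRYABLE = {"content_rejected", "invalid_number", "governance_block"}

def backoff_delay(attempt: int, rng: random.Random) -> float:
    """Exponential backoff with full jitter: uniform in [0, min(cap, base * 2**attempt)]."""
    return rng.uniform(0.0, min(MAX_DELAY_S, BASE_DELAY_S * 2 ** attempt))

def plan_retry(attempts_made: int, error_bucket: str, failed_route: str,
               routes: list[str], rng: random.Random) -> Optional[tuple[float, str]]:
    """Return (delay_seconds, next_route), or None if the message must not be retried."""
    if attempts_made >= MAX_ATTEMPTS:
        return None                      # bounded: cap reached
    if error_bucket in NON_RETRYABLE:
        return None                      # error-aware: retrying cannot help
    alternatives = [r for r in routes if r != failed_route]
    if not alternatives:
        return None                      # route-aware: never resend on the failing path
    return backoff_delay(attempts_made, rng), alternatives[0]
```

Jitter spreads retries out in time, so a burst of failures does not turn into a synchronized burst of resends at the worst moment.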

An Error-to-Action Matrix (How On-Call Teams Should Think)

You don't need a perfect error taxonomy. You need actionable buckets:

- Route degradation suspected → Shift traffic (hot failover or canary)

- Throughput/limit suspected → Throttle and protect mission-critical traffic

- Content/governance suspected → Pause affected campaign, roll back templates

- Unknown/timeout → Limited retry + monitor DLR latency changes

Key Point: The goal is to prevent "retry everything" from becoming your default incident response. During peak events, indiscriminate retries are one of the most common causes of cascading failures.
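The matrix above can live in code as a lookup table, so on-call tooling suggests one action per bucket instead of defaulting to retries. In the sketch below, the error codes in `ERROR_BUCKETS` are hypothetical placeholders. Real codes differ by provider and should come from your provider's documentation.

```python
from enum import Enum

class Action(Enum):
    SHIFT_TRAFFIC = "shift traffic (hot failover or canary)"
    THROTTLE = "throttle and protect mission-critical traffic"
    PAUSE_CAMPAIGN = "pause affected campaign, roll back templates"
    LIMITED_RETRY = "limited retry + monitor DLR latency changes"

# Hypothetical provider error codes mapped to the buckets in the matrix.
ERROR_BUCKETS = {
    "ROUTE_UNAVAILABLE": "route",
    "RATE_LIMITED": "throughput",
    "CONTENT_FILTERED": "content",
    "TEMPLATE_REJECTED": "content",
}

ACTIONS = {
    "route": Action.SHIFT_TRAFFIC,
    "throughput": Action.THROTTLE,
    "content": Action.PAUSE_CAMPAIGN,
}

def action_for(error_code: str) -> Action:
    """Unknown codes and timeouts fall through to a limited retry, never to 'retry everything'."""
    bucket = ERROR_BUCKETS.get(error_code, "unknown")
    return ACTIONS.get(bucket, Action.LIMITED_RETRY)
```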

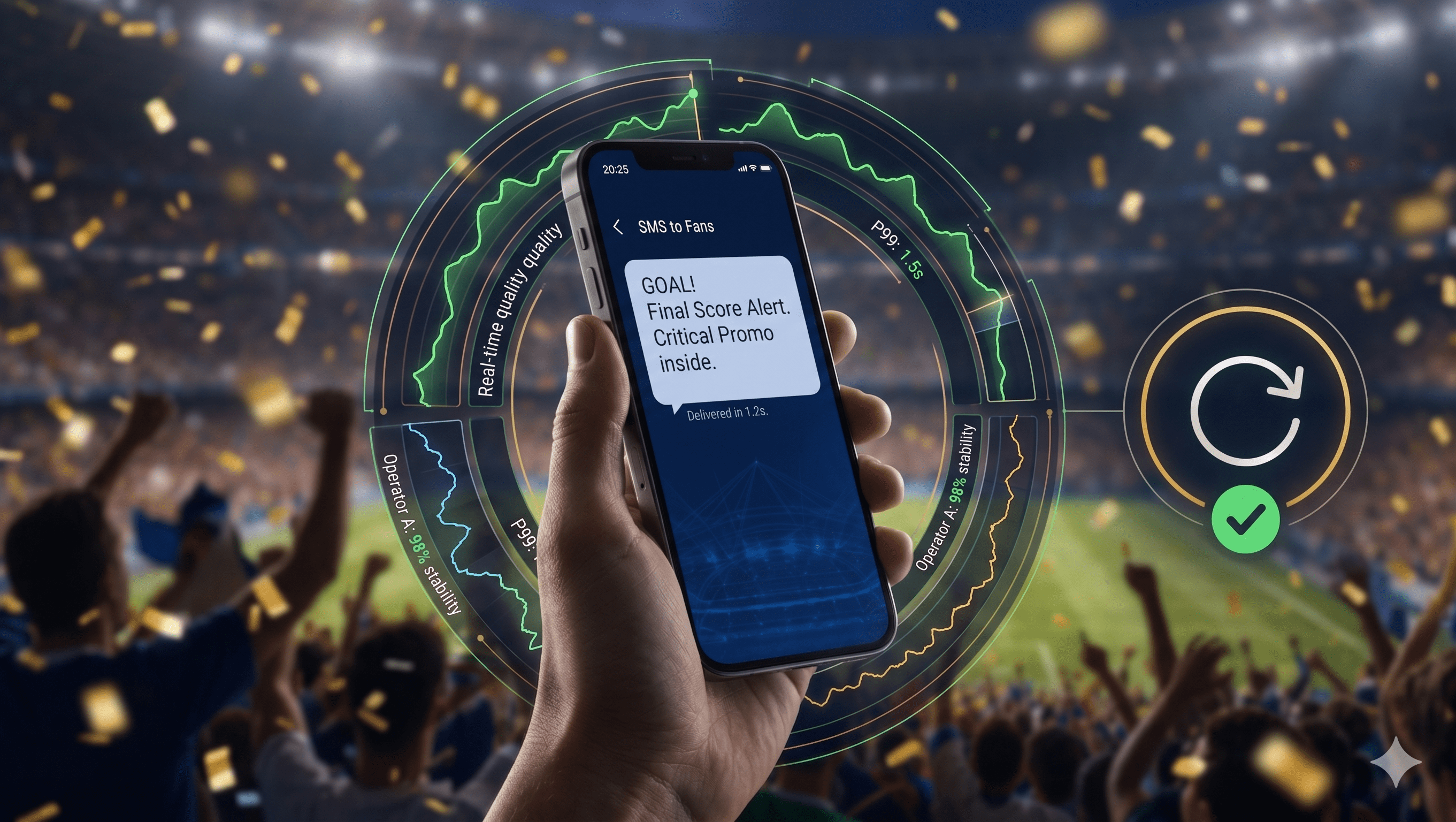

3) Observability: Your Peak Dashboard Must Answer 5 Questions

During match windows, the dashboard must be a decision tool—not a vanity chart. Industry data from Sinch's 2025 messaging reliability study shows that teams with pre-defined incident question frameworks resolve issues 4x faster than those without structured triage.

The 5 Incident Questions

- Is this global, or isolated to a market/carrier?

- Is it a delivery problem, a latency problem, or a DLR reporting problem?

- Is it routing-related, or campaign/template-related?

- Which message class is impacted (mission-critical vs promo)?

- What action will measurably improve outcomes in the next 30 minutes?
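The first two questions can be answered mechanically from per-market counts. The sketch below is a rough heuristic under stated assumptions. Each market window carries sent, delivered, and received-DLR counts plus a baseline delivery rate. A market with low DLR completeness is flagged as a possible reporting problem first, because its delivered rate cannot be trusted yet. The `MarketWindow` type, the `triage` function, and both thresholds are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class MarketWindow:
    market: str                # e.g. "BR/carrier-a" (hypothetical label)
    sent: int
    delivered: int             # receipts reporting "delivered"
    dlr_received: int          # receipts received with any status
    baseline_delivery: float   # this market's normal delivered / sent

def triage(windows: list[MarketWindow], max_drop: float = 0.05,
           min_dlr_completeness: float = 0.8) -> dict:
    """Rough answers to question 1 (scope) and question 2 (delivery vs. DLR reporting)."""
    delivery_problem, reporting_problem = [], []
    active = [w for w in windows if w.sent > 0]
    for w in active:
        if w.dlr_received / w.sent < min_dlr_completeness:
            reporting_problem.append(w.market)   # check DLR freshness before rerouting
        elif w.delivered / w.sent < w.baseline_delivery - max_drop:
            delivery_problem.append(w.market)
    affected = len(delivery_problem) + len(reporting_problem)
    if affected == 0:
        scope = "none"
    elif affected == len(active):
        scope = "global"
    else:
        scope = "isolated"
    return {"scope": scope, "delivery_problem": delivery_problem,
            "reporting_problem": reporting_problem}
```

Low completeness alone cannot distinguish reporting lag from messages that are genuinely stuck, so treat a "reporting problem" result as a prompt to check DLR freshness, not as a verdict.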

The Minimum Slices You Need

At minimum, you need:

- Delivery and latency percentiles by country/carrier/route

- DLR completeness + DLR latency distribution

- Error codes over time (top N by market)

- Queue depth and backlog drain time

- Segmentation by message class (mission-critical vs promo)

If you can't slice by route, you won't be able to triage effectively.
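The slices above amount to a group-by over send records plus a few summary statistics. The sketch below assumes each send record carries its country, carrier, route, message class, and (once a receipt arrives) its delivery status and DLR latency. It uses the nearest-rank percentile method and computes delivery rate only over messages that already have a DLR. The `SendRecord` type and helper functions are hypothetical, and backlog drain time is modeled simply as queue depth divided by net drain rate.

```python
import math
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional

@dataclass
class SendRecord:
    country: str
    carrier: str
    route: str
    message_class: str              # "mission_critical" or "promo"
    delivered: Optional[bool]       # None until a DLR arrives
    dlr_latency_s: Optional[float]  # submit-to-receipt time, if known

def percentile(values: list[float], p: float) -> float:
    """Nearest-rank percentile (0 < p <= 100) of a non-empty list."""
    ordered = sorted(values)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]

def slice_metrics(records: list[SendRecord]) -> dict:
    """Delivery, DLR completeness, and DLR latency percentiles per slice."""
    groups = defaultdict(list)
    for r in records:
        groups[(r.country, r.carrier, r.route, r.message_class)].append(r)
    out = {}
    for key, rs in groups.items():
        with_dlr = [r for r in rs if r.delivered is not None]
        latencies = [r.dlr_latency_s for r in with_dlr if r.dlr_latency_s is not None]
        out[key] = {
            "sent": len(rs),
            "dlr_completeness": len(with_dlr) / len(rs),
            "delivery_rate": sum(r.delivered for r in with_dlr) / len(with_dlr) if with_dlr else None,
            "dlr_p50_s": percentile(latencies, 50) if latencies else None,
            "dlr_p95_s": percentile(latencies, 95) if latencies else None,
        }
    return out

def backlog_drain_minutes(queue_depth: int, send_rate_per_min: float,
                          inflow_per_min: float) -> float:
    """Minutes to clear the queue; infinite while inflow keeps pace with sending."""
    net = send_rate_per_min - inflow_per_min
    return math.inf if net <= 0 else queue_depth / net
```

For example, a backlog of 6,000 messages draining at 2,000 per minute while 1,000 per minute still arrive clears in 6 minutes. If inflow matches the send rate, the backlog never drains.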

Alerting: Alert on Changes, Not Just Low Averages

Peak incidents are often detected as shifts:

- DLR freshness suddenly worsens

- One carrier's delivered rate drops

- Promo filtering spikes after a template change

Alerts should be market- and class-aware. Global alerts are often too noisy to be useful.
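Alerting on changes means comparing each slice against its own baseline rather than checking a global average against a fixed floor. The sketch below covers the "one carrier's delivered rate drops" case. The same comparison can be applied to DLR freshness. The per-class tolerances in `MAX_RELATIVE_DROP`, the `min_sent` noise guard, and the `change_alerts` function are hypothetical.

```python
# Illustrative tolerances: mission-critical traffic alerts on a smaller relative drop.
MAX_RELATIVE_DROP = {"mission_critical": 0.03, "promo": 0.10}

Counts = dict[tuple[str, str], tuple[int, int]]   # (market, class) -> (delivered, sent)

def change_alerts(baseline: Counts, current: Counts, min_sent: int = 200) -> list[tuple[str, str]]:
    """Return (market, class) slices whose delivery rate fell more than the class tolerance.

    Slices with too little current traffic are skipped so small samples don't page anyone.
    """
    alerts = []
    for key, (cur_delivered, cur_sent) in current.items():
        if key not in baseline or cur_sent < min_sent:
            continue
        base_delivered, base_sent = baseline[key]
        if base_sent == 0 or base_delivered == 0:
            continue
        base_rate = base_delivered / base_sent
        cur_rate = cur_delivered / cur_sent
        if (base_rate - cur_rate) / base_rate > MAX_RELATIVE_DROP[key[1]]:
            alerts.append(key)
    return sorted(alerts)
```

A five-point drop on one carrier's mission-critical traffic can trip this alert even when the global average barely moves, which is exactly the case a global alert would miss.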

4) The Peak Runbook (Copy/Paste)

Peak readiness is mostly operational. The following runbook provides a structured approach for World Cup-scale traffic events.

Before a Match Window

- ✓ Confirm routing policies and thresholds

- ✓ Verify dashboards and alert channels

- ✓ Freeze last-minute template changes for major promos

- ✓ Confirm decision rights: who can throttle or pause campaigns if mission-critical metrics degrade

During an Incident

- ✓ Identify affected markets/carriers first

- ✓ Check DLR freshness (is the problem real delivery, or reporting lag?)

- ✓ Choose one action path: reroute (hot failover or canary shift), throttle to protect mission-critical traffic, or pause the promotional campaign if filtering spikes

After the Incident

- ✓ Document which routes degraded and which failover pattern worked

- ✓ Update thresholds and routing rules

- ✓ Improve template governance where needed

Where EngageLab SMS Fits (A Concrete Example to Evaluate)

This blueprint is provider-agnostic, but it maps directly to the capabilities teams look for when they run a World Cup peak POC. EngageLab SMS is designed to support:

- Real-time intelligent routing based on channel quality monitoring

- Ultra-high deliverability, positioned at 99%+, backed by global multi-node infrastructure

- High-concurrency support for promotional bursts

- Rich-text templates to keep campaigns consistent under pressure

- Automation + seamless integration so teams can implement controls without heavy operational overhead

- 24/7 operational support for peak windows

Next Steps

If you want to validate routing, retries, and observability against your own traffic, use the blueprint above as the checklist for a peak POC.

Whether you're running promotional campaigns tied to match moments or mission-critical notifications during peak traffic, EngageLab SMS provides the routing intelligence, retry discipline, and observability needed to deliver reliably when it matters most.

Frequently Asked Questions

What is SMS intelligent routing and why does it matter during peak traffic events?

SMS intelligent routing dynamically selects carrier paths based on real-time conditions rather than static configurations. During World Cup peak events, traffic spikes of 300-500% above baseline can cause route degradation within minutes. According to GSMA's 2025 messaging infrastructure report, static routing configurations fail 40-60% more often during peak events compared to intelligent routing systems.

Intelligent routing monitors delivery rates, DLR latency, and error codes to automatically shift traffic when routes degrade—before complaint volume increases. This reduces delivery failures and ensures mission-critical messages continue flowing during high-concurrency periods.

How do SMS retry strategies affect peak traffic performance?

SMS retry strategies can either stabilize or destabilize your system under peak load. According to Twilio's engineering research on message delivery optimization, aggressive retry policies during traffic bursts can amplify volume by 3-5x, increasing filtering risk, cost overruns, and queue congestion.

Effective retry discipline requires: bounded retry caps to prevent volume amplification, exponential backoff to avoid hammering failing routes, route-aware logic to avoid retrying on the same failing path, and error-aware classification where some failures (governance/content issues) should not trigger retries at all. The goal is to prevent "retry everything" from becoming your default incident response.

What are the three main SMS failover patterns for peak events?

Three proven SMS failover patterns for peak traffic:

(1) Hot Failover—switch quickly when thresholds break, best for mission-critical SMS where delays are expensive (risk: overreacting to noise if thresholds are poorly tuned);

(2) Canary Shifting—move 5-10% of traffic first, then ramp, best for promotional traffic or when partial degradation is suspected (risk: slower to fully recover if route is truly down);

(3) Market Isolation—contain a bad route to prevent blast radius expansion, best for global sends where one market is unstable (risk: requires clean segmentation and route-level reporting).

A mature SMS system supports more than one pattern because peak incidents don't all look the same.

What are the 5 critical observability questions for SMS incident response?

During match windows, your SMS dashboard must answer 5 incident questions in minutes:

(1) Is this global, or isolated to a market/carrier?

(2) Is it a delivery problem, a latency problem, or a DLR reporting problem?

(3) Is it routing-related, or campaign/template-related?

(4) Which message class is impacted (mission-critical vs promo)?

(5) What action will measurably improve outcomes in the next 30 minutes?

Industry data from Sinch's 2025 messaging reliability study shows that teams with pre-defined incident question frameworks resolve issues 4x faster than those without structured triage. Without dashboard slices that answer these questions, you cannot triage effectively or communicate incident scope to stakeholders.

What minimum metrics should an SMS peak traffic dashboard include?

At minimum, your SMS peak traffic dashboard needs: delivery and latency percentiles by country/carrier/route, DLR completeness and DLR latency distribution, error codes over time (top N by market), queue depth and backlog drain time, and segmentation by message class (mission-critical vs promo). If you cannot slice by route, you cannot triage effectively.

According to AWS's SMS delivery optimization guide, DLR lag increases 200-400% during carrier congestion periods, making real-time DLR freshness monitoring critical.

Alert on changes, not just low averages—peak incidents are often detected as shifts: DLR freshness suddenly worsens, one carrier's delivered rate drops, or promo filtering spikes after a template change.

For more information about EngageLab's SMS solutions, visit https://www.engagelab.com/sms. To start testing SMS routing, retries, and observability for your peak traffic scenarios, create a free account or contact our sales team.